Feature Articles

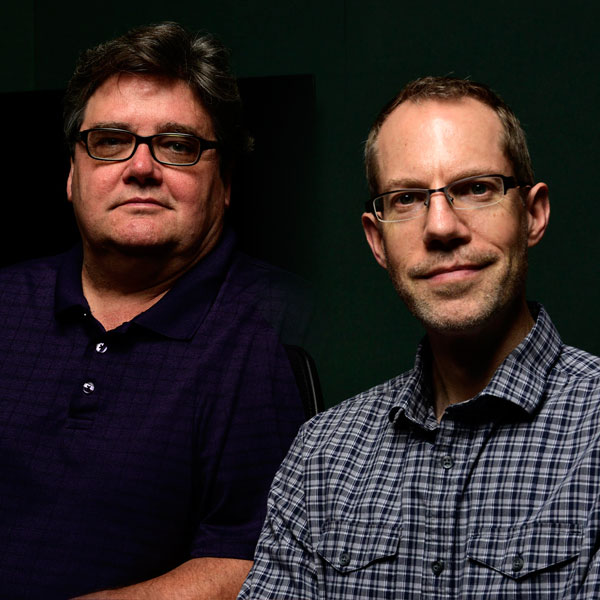

This month Francis Rumsey interviews Steven Fenton about his paper on the perceptual modelling of “punch,” published in the June AES Journal.

AUGUST 2019

SEPTEMBER 2019